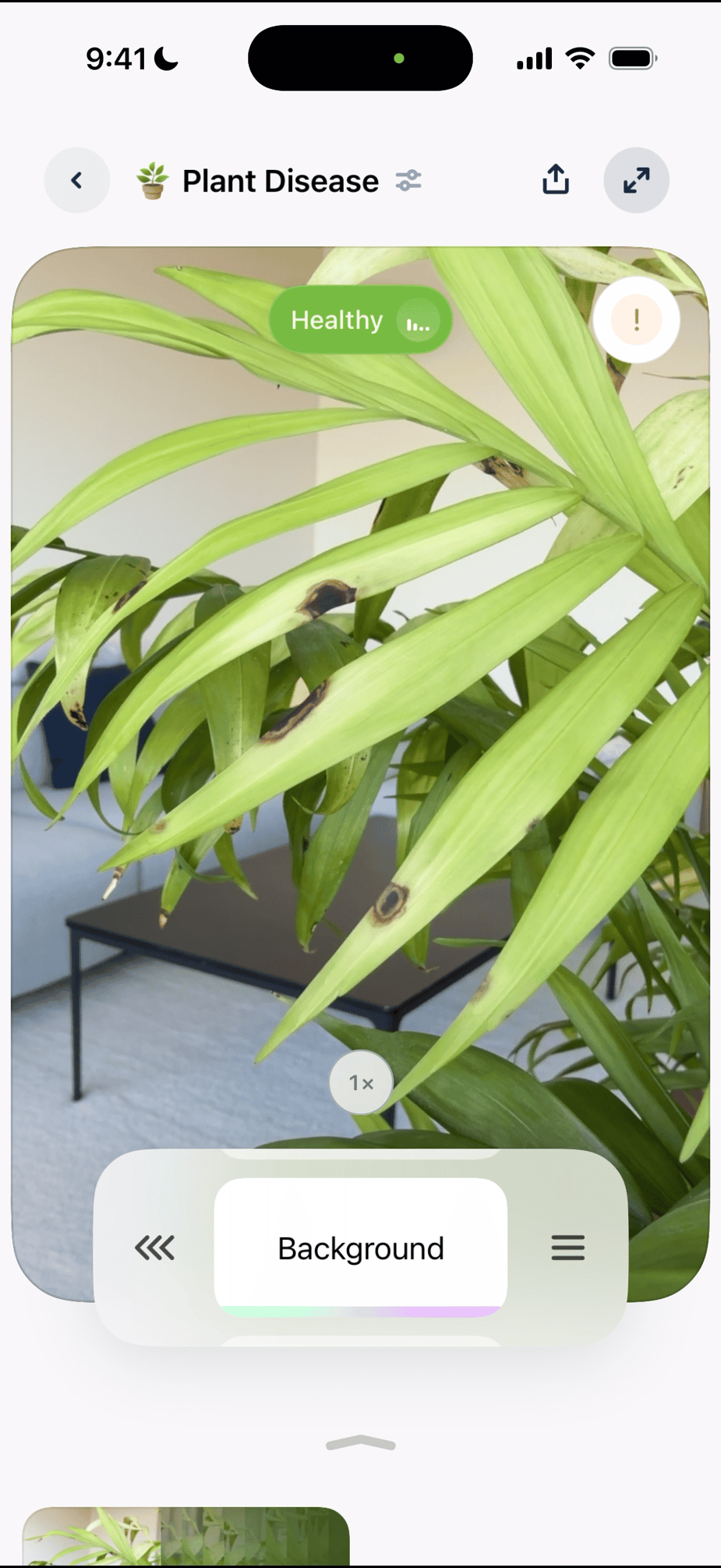

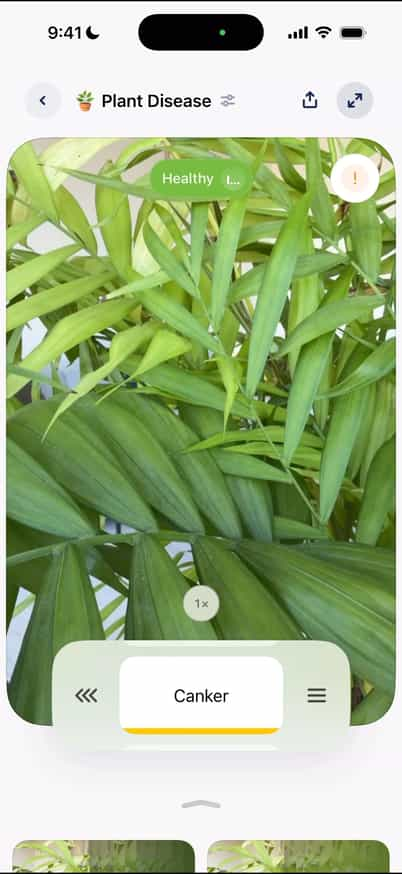

How It Works

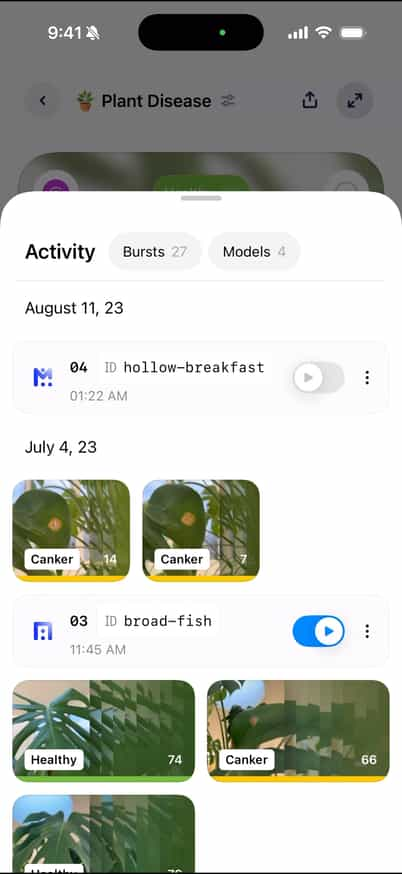

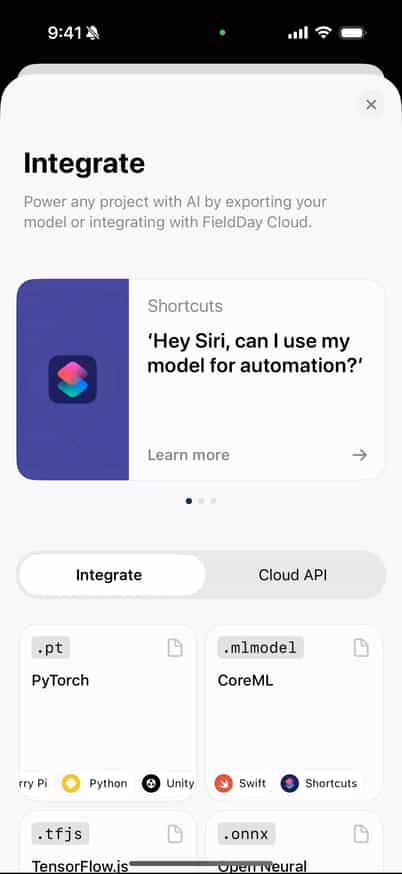

FieldDay is a mobile-first tool for building custom vision AI directly from your iPhone. It makes data collection fast and intuitive, allowing you to create labeled datasets in minutes by simply capturing images in the real world. By keeping this process on your phone and in the field, the app shortens the loop between collecting data, training models, and testing results.

Applications in Retail

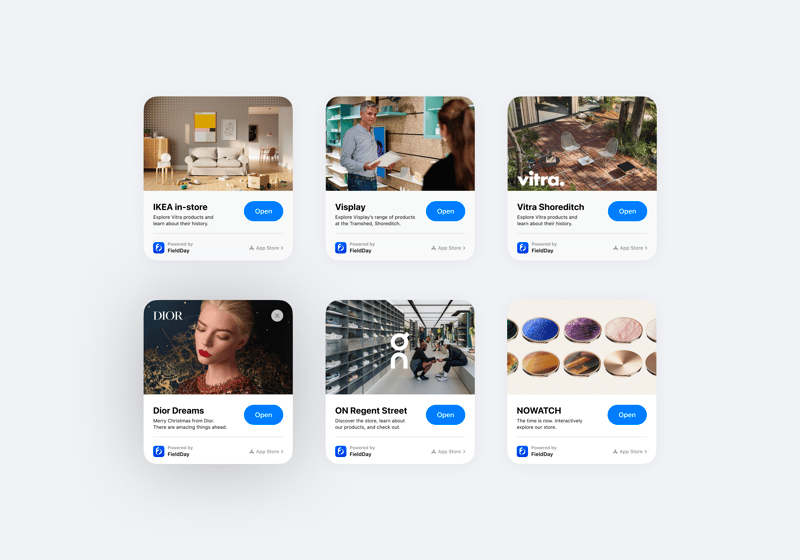

We also developed a retail-focused offering, working with brands like Vitra and McLaren to create interactive in-store experiences powered by lightweight, on-device models trained with FieldDay. Using App Clips, visitors could scan products or environments with their phones and receive a narrative-driven layer of information that guided them through spaces, highlighted design details, and surfaced relevant context in real time. This enabled a more exploratory, self-directed shopping experience without requiring users to download a full app.

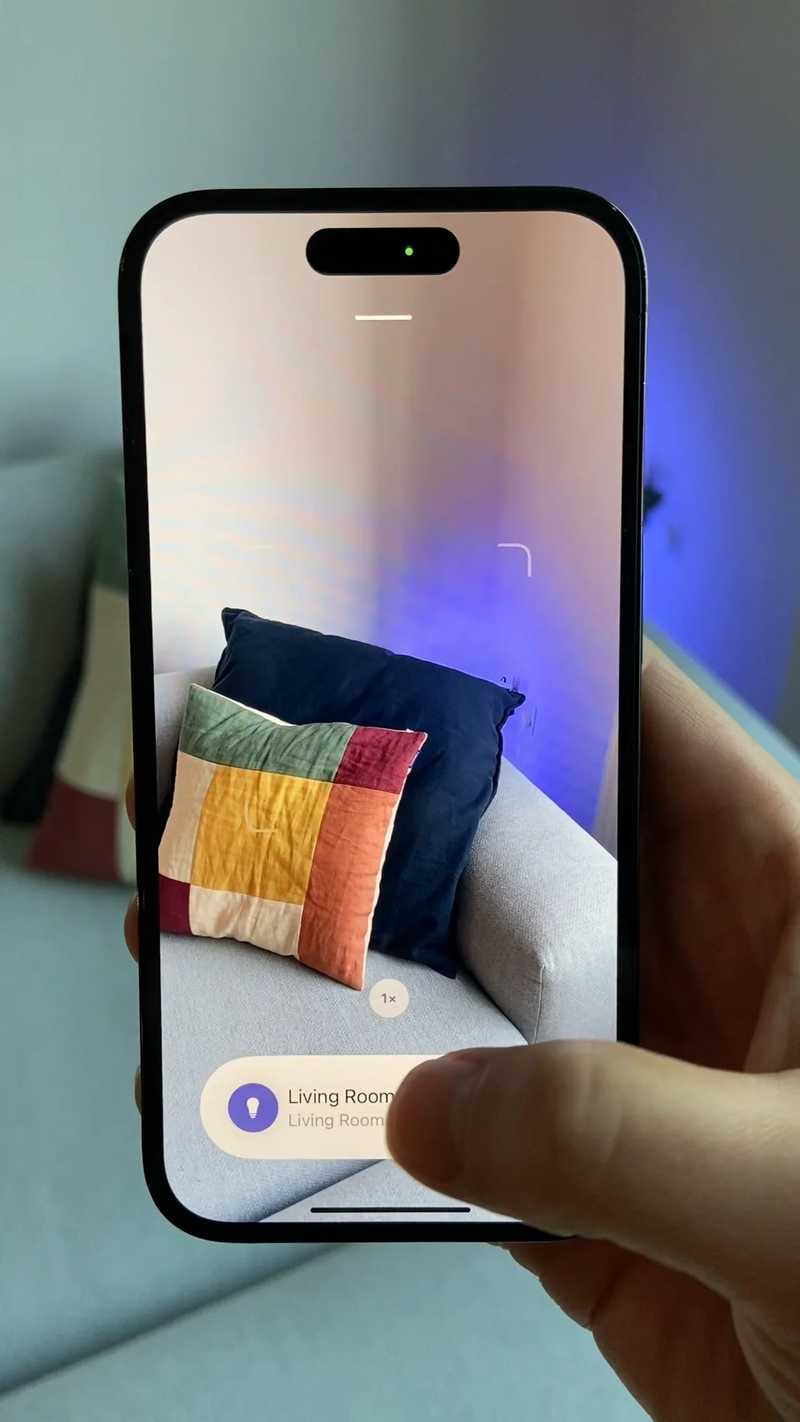

Using Vision AI to Control the Home

We also explored how FieldDay models could extend beyond capture and into real-world control through a HomeKit integration. By using a trained classifier as a trigger, the app could recognize objects or spaces in the home and surface the relevant HomeKit controls directly in the camera view. For example, pointing the camera at a device, room, or object could automatically bring up controls for lights, speakers, or other smart devices associated with that context. This turned vision models into a lightweight interface for interacting with IoT systems, allowing users to control their environment simply by looking at it.
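
To make the trigger idea concrete, here is a minimal, language-agnostic sketch written in Python. It is not FieldDay's implementation: the `Prediction` type, the `CONTROLS_BY_LABEL` table, the labels, and the 0.8 confidence threshold are all hypothetical, and in an iOS app the prediction would come from an on-device model and the controls from HomeKit rather than a hard-coded dictionary.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Prediction:
    """One classifier result for the current camera frame (hypothetical type)."""
    label: str
    confidence: float


# Hypothetical mapping from classifier labels to the controls shown in the
# camera view. A real app would look these up from the user's smart-home setup.
CONTROLS_BY_LABEL: Dict[str, List[str]] = {
    "desk_lamp": ["Desk Lamp power", "Desk Lamp brightness"],
    "living_room": ["Ceiling Lights", "Speaker volume"],
}


def controls_for(prediction: Prediction, threshold: float = 0.8) -> List[str]:
    """Return the controls to surface, or an empty list if nothing should show.

    Low-confidence predictions are ignored so that controls do not flicker
    into view while the camera is moving between objects.
    """
    if prediction.confidence < threshold:
        return []
    # Labels with no associated controls also surface nothing.
    return CONTROLS_BY_LABEL.get(prediction.label, [])


if __name__ == "__main__":
    print(controls_for(Prediction("desk_lamp", 0.93)))
    print(controls_for(Prediction("desk_lamp", 0.41)))
```

The threshold is the main design choice in a sketch like this: set it too low and controls appear for the wrong object, set it too high and the user has to hold the camera still before anything shows up.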